modsecurity rule to filter CVE-2021-44228/LogJam/Log4Shell [update]

December 10, 2021

As a fast workaround, a friend of mine made a modsecurity rule to filter CVE-2021-44228/LogJam/Log4Shell, which he allowed me to share with you.

SecRule \

ARGS|REQUEST_HEADERS|REQUEST_URI|REQUEST_BODY|REQUEST_COOKIES|REQUEST_LINE|QUERY_STRING "jndi:ldap:" \

"phase:1, \

id:751001, \

t:none, \

deny, \

status:403, \

log, \

auditlog, \

msg:'Block: CVE-2021-44228 - deny pattern \"jndi:ldap:\"', \

severity:'5', \

rev:1, \

tag:'no_ar'"

New improved version:

SecRule \

ARGS|REQUEST_HEADERS|REQUEST_URI|REQUEST_BODY|REQUEST_COOKIES|REQUEST_LINE|QUERY_STRING "jndi:ldap:|jndi:dns:|jndi:rmi:|jndi:rni:|\${jndi:" \

"phase:1, \

id:751001, \

t:none, \

deny, \

status:403, \

log, \

auditlog, \

msg:'DVT: CVE-2021-44228 - phase 1 - deny known \"jndi:\" pattern', \

severity:'5', \

rev:1, \

tag:'no_ar'"

SecRule \

ARGS|REQUEST_HEADERS|REQUEST_URI|REQUEST_BODY|REQUEST_COOKIES|REQUEST_LINE|QUERY_STRING "jndi:ldap:|jndi:dns:|jndi:rmi:|jndi:rni:|\${jndi:" \

"phase:2, \

id:751002, \

t:none, \

deny, \

status:403, \

log, \

auditlog, \

msg:'DVT: CVE-2021-44228 - phase 2 - deny known \"jndi:\" pattern', \

severity:'5', \

rev:1, \

tag:'no_ar'"
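To get a feeling for what this pattern catches and what it misses, the same alternation can be tried out in a few lines of Python. This is only a sketch: Python's `re` module is not the regex engine ModSecurity uses, the function name `would_block` and the sample strings are made up for illustration, and the behaviour of the real rule should be verified on the actual server.

```python
import re

# Same alternation as the rule above. With no explicit operator ModSecurity
# applies a regular-expression match, and with t:none no transformation
# (such as lowercasing) is applied, so the match stays case-sensitive.
JNDI_PATTERN = re.compile(r"jndi:ldap:|jndi:dns:|jndi:rmi:|jndi:rni:|\$\{jndi:")


def would_block(value: str) -> bool:
    """Return True if the pattern is found anywhere in the given value."""
    return JNDI_PATTERN.search(value) is not None


# Hypothetical header values, for illustration only.
samples = [
    "${jndi:ldap://example.invalid/a}",           # plain lookup
    "${jndi:iiop://example.invalid/a}",           # caught by the generic ${jndi: part
    "${${lower:j}ndi:ldap://example.invalid/a}",  # nested lookup hides "jndi:"
    "${JNDI:LDAP://example.invalid/a}",           # different case
]
for sample in samples:
    print(would_block(sample), sample)
```

The last two samples are not matched, so a rule like this should be treated as a stopgap that buys time until the affected software is patched, not as complete protection.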

Jitsi Workaround for CVE-2021-44228/LogJam/Log4Shell

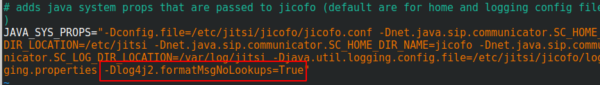

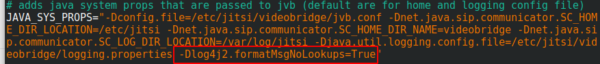

You have surely heard of LogJam / Log4Shell / CVE-2021-44228 – if not, take a look at this blog post. If you’re running Jitsi, it is most likely vulnerable, and as there is currently no fix, you need a workaround, which I provide here. You need to add -Dlog4j2.formatMsgNoLookups=True at the correct place in each of the following files – the position is important.

/etc/jitsi/jicofo/config

/etc/jitsi/videobridge/config

And restart the processes or restart the server.

Proxmox Container with Debian 10 does not work after upgrade

September 8, 2019

I just did an apt update / upgrade of a Debian 10 container, restarted it afterwards and got the following:

# pct start 105

Job for pve-container@105.service failed because the control process exited with error code.

See "systemctl status pve-container@105.service" and "journalctl -xe" for details.

command 'systemctl start pve-container@105' failed: exit code 1

With a more verbose startup I got the following:

# lxc-start -n 105 -F -l DEBUG -o /tmp/lxc-ID.log

lxc-start: 105: conf.c: run_buffer: 335 Script exited with status 25

lxc-start: 105: start.c: lxc_init: 861 Failed to run lxc.hook.pre-start for container "105"

lxc-start: 105: start.c: __lxc_start: 1944 Failed to initialize container "105"

lxc-start: 105: tools/lxc_start.c: main: 330 The container failed to start

lxc-start: 105: tools/lxc_start.c: main: 336 Additional information can be obtained by setting the --logfile and --logpriority options

and a look into /tmp/lxc-ID.log shows the problem:

lxc-start 105 20190908130857.595 DEBUG conf - conf.c:run_buffer:326 - Script exec /usr/share/lxc/hooks/lxc-pve-prestart-hook 105 lxc pre-start with output: unsupported debian version '10.1'

lxc-start 105 20190908130857.604 ERROR conf - conf.c:run_buffer:335 - Script exited with status 25

lxc-start 105 20190908130857.604 ERROR start - start.c:lxc_init:861 - Failed to run lxc.hook.pre-start for container "105"

The problem was that the Debian version, which changed from 10.0 to 10.1, was not recognized by the Proxmox script. The responsible code is in /usr/share/perl5/PVE/LXC/Setup/Debian.pm, but in this case I didn’t need to change anything: I just needed to update the Proxmox host to the newest minor version and it worked again, as the code in Debian.pm had been changed by the developers. I just thought I’d share this, as others may run into the same problem and the error reporting is not that good in this case. 🙂

Howto visualize your water meter and get alerted if too much water is used

May 1, 2019

In the village where I live, the water meter is replaced every 5 years, and this year was the fifth year. I took the opportunity to ask the municipal office whether it was possible to get a water meter with an impulse module, which I can integrate into my network. And they said yes 🙂 – Thx again!

So last week they came by and put the new one in. I was not at home, and when I came home I found the following:

They also left the packaging, so I was able to guess the module. For me it looked like a “Ringkolben-Patronenzähler MODULARISRTK-OPX” from Wehrle as shown in this datasheet. I was not 100% sure if it was the S0 or M-Bus version, but a friend told me it must be the S0 Version as the M-Bus is much more expensive, so I went for it.

Getting the S0 connected

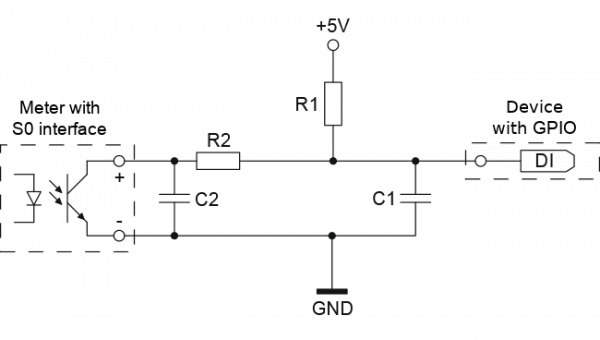

Basically the meter has an optocoupler (optoelectronic coupler) which is powered in my case by an internal battery. For every liter of water that runs through the meter, the two cables shown above get connected for a short period (e.g. 100ms). In the simplest case it would be possible to just use a pull-up resistor to 5V, but this may lead to problems. It is better to use 2 resistors and 2 capacitors to stabilize the impulse and guard against unwanted effects such as electromagnetic interference. As it has been too long since I learned that at school, I asked a friend who does circuits all the time for help, which led to this drawing:

And he told me to use following resistors and capacitors:

- R1 – 4.7kOhm

- R2 – 470Ohm

- C1 – 100nF

- C2 – 10nF

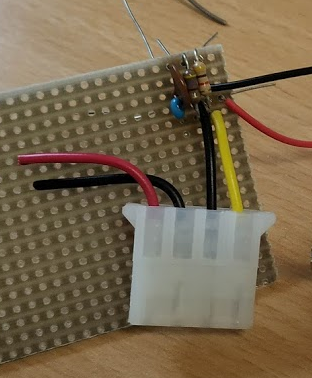

At home, I built that circuit (not fully done in the picture):

As you can see I used old PC power supply connectors to connect the water meter, so I can disconnect it easily. Hardware costs are under 1 Euro so far – OK, you need some stuff at home already (e.g. a soldering iron) 🙂

So, now back to areas I know better ….

Getting the signal onto my network

I’ve several Raspberry Pis at home and at first I thought about using one, but that would be overkill in my case as I wanted to do visualization and alerting in a container on my home server anyway. I went with something Arduino-like, but cheaper. 🙂

I went for a NodeMCU which has all I needed for that project:

- Digital input with interrupt triggering –> no polling and no missed impulses

- WiFi support to connect to my IoT network

- Integration with the Arduino IDE

- It costs under 5 Euro

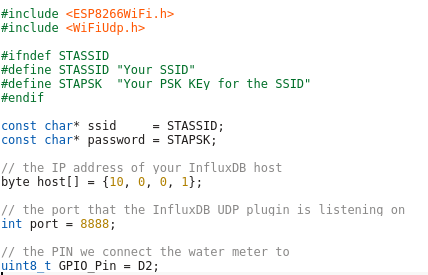

Let’s take a look at my code – which you can download from here. In the first part of the code we import the needed libraries and define some variables:

- The WiFi SSID and password

- The host and port we will notify for every liter of water – We’ll use InfluxDB for that and you will see how easy that makes it.

- The PIN we connect the water meter to – make sure it supports interrupts.

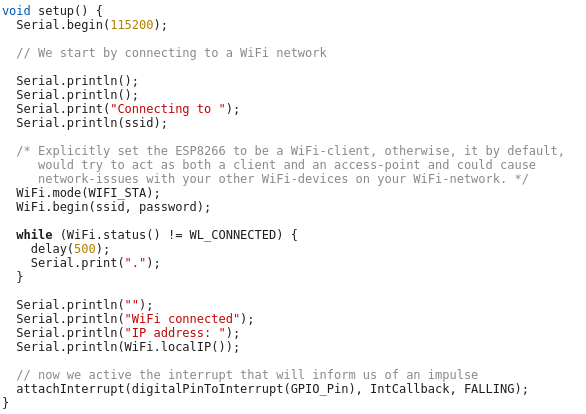

And now the code which is executed once at startup, where we connect to the Wifi and attach the interrupt.

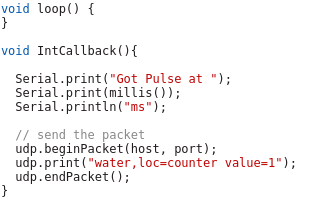

And finally we need the code that gets called by the interrupt – it just sends a UDP message in the InfluxDB format for each liter of water; the rest is done by the InfluxDB time series database.

As you see the code is really easy – the complicated stuff is done by the InfluxDB.
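To test the InfluxDB side without the hardware, a message of the same kind can be sent from any machine on the network. The following is a minimal Python sketch, not the NodeMCU code from the post: the measurement name `water`, the tag `meter` and the field `liters` are assumptions made up for this example, so use whatever names your own sketch sends.

```python
import socket


def build_line(measurement: str, liters: int, meter: str) -> str:
    """Build one InfluxDB line-protocol record: measurement,tag=value field=value.

    The trailing "i" marks the field as an integer. No timestamp is given,
    so InfluxDB assigns the time at which it receives the record.
    """
    return f"{measurement},meter={meter} liters={liters}i"


def send_pulse(host: str, port: int, line: str) -> None:
    """Send a single record as one UDP datagram (fire and forget)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(line.encode("utf-8"), (host, port))


# Example call (hypothetical address, port as configured for the UDP listener):
# send_pulse("192.0.2.10", 8888, build_line("water", 1, "house"))
```

As UDP gives no delivery confirmation, a lost datagram means a lost liter. For a home dashboard that is a trade-off I would consider acceptable, but it is worth knowing.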

Visualization and Alerting

Sure I could write my own visualization and alerting and I have done so in the past but these times are gone. InfluxDB and some additional projects from the same guys do everything and better than I could for such a home project. You will see how easy it really is. I started with an empty LXC container on my Linux home server. I use Debian 9 in the container, but InfluxDB is packaged for all major distributions.

First we need to install curl and https support for apt – my containers are as small as possible.

# apt install curl apt-transport-https

Download the signing key for the InfluxDB repository.

# curl -sL https://repos.influxdata.com/influxdb.key | apt-key add -

This is followed by adding the repository to the list

# cat >> /etc/apt/sources.list

deb https://repos.influxdata.com/debian stretch stable

and installing the software.

# apt update

# apt-get install influxdb chronograf kapacitor

By default, the UDP interface on InfluxDB is disabled. You’ll want to modify the configuration file /etc/influxdb/influxdb.conf to look similar to this:

[[udp]]

enabled = true

bind-address = ":8888"

database = "db_iot"

Now we just need to enable the various services

# systemctl enable influxdb

# systemctl start influxdb

# systemctl enable kapacitor

# systemctl start kapacitor

If everything works you should see something like this

# netstat -lpn | grep 8888

tcp6 0 0 :::8888 :::* LISTEN 1505/chronograf

udp6 0 0 :::8888 :::* 1539/influxd

Now we just need to create the database we configured for UDP:

# influx

Connected to http://localhost:8086 version 1.7.6

InfluxDB shell version: 1.7.6

Enter an InfluxQL query

> CREATE DATABASE db_iot

> exit

After this just open your browser and connect to http://<ipAddressOfServer>:8888 and fill out the form with the following details:

- Connection String: Enter the hostname or IP of the machine that InfluxDB is running on, and be sure to include InfluxDB’s default port 8086. In my/our case it is localhost / 127.0.0.1

- Connection Name: Enter a name for your connection string.

- Username and Password: These fields can remain blank unless you’ve enabled authorization in InfluxDB.

- Telegraf Database Name: Optionally, enter a name for your Telegraf database. The default name is Telegraf.

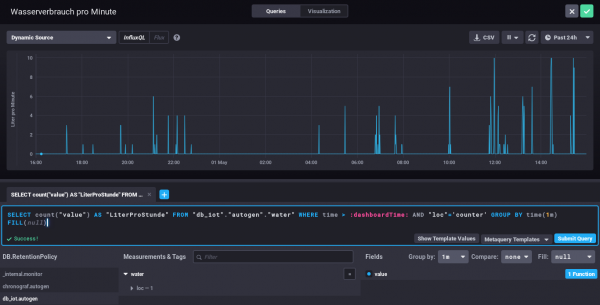

Everything else can be done via the browser – Just take a look at the configuration of one of my dashboard elements – the SQL code is written by clicking around :-).

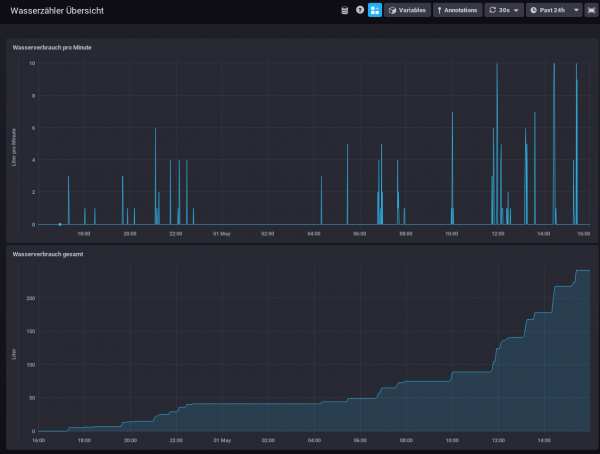

My water meter dashboard looks currently like this:

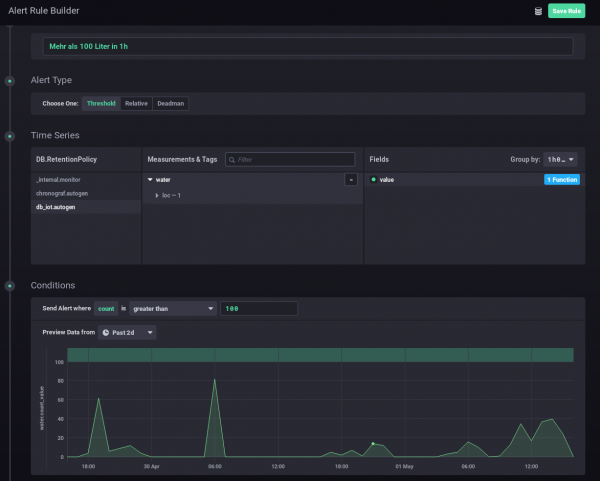

And you can also define alerts. In this case I wanted an alert message to be sent if more than 100 liters of water are used in one hour – I should know if that happens and whether it is OK.

I hope you see how easy visualizing a water meter and alerting on it can be. It is also really cheap – about 5 Euro for everything if you already have a server; otherwise let it run on a Raspberry Pi (about 30 Euro), rent a virtual server for 1-2 Euro/month or use the container feature of your NAS.
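The logic behind such an alert is a simple sliding window. In my setup Kapacitor does this work, so the following Python sketch is only an illustration of the rule itself and not how Kapacitor implements it; the class name and parameters are made up for this example.

```python
from collections import deque


class HourlyUsageAlert:
    """Track one-liter pulses and report when a window exceeds the limit."""

    def __init__(self, limit_liters: int = 100, window_seconds: float = 3600.0):
        self.limit_liters = limit_liters
        self.window_seconds = window_seconds
        self._pulses = deque()  # timestamps (in seconds) of recent pulses

    def add_pulse(self, timestamp: float) -> bool:
        """Record one liter at `timestamp`; return True if the limit is exceeded."""
        self._pulses.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop pulses that have fallen out of the window.
        while self._pulses and self._pulses[0] <= cutoff:
            self._pulses.popleft()
        return len(self._pulses) > self.limit_liters
```

With the defaults, the 101st pulse within one hour is the first one that returns True.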

Howto install Wireguard in an unprivileged container (Proxmox)

April 14, 2019

Wireguard is the new star on the block concerning VPNs – and yes, it has some benefits over the older VPN technologies, but I won’t talk about them as there is plenty of information about that on the Internet. This blog post just explains how to set it up in an unprivileged container. In my case everything is done on a Proxmox server. Let’s start:

On the Proxmox host itself we need to get the kernel module running. As Proxmox is based on Debian we just pin the Wireguard package from unstable, which is the recommended way by the Debian project in this case.

echo "deb http://deb.debian.org/debian/ unstable main" > /etc/apt/sources.list.d/unstable-wireguard.list

printf 'Package: *\nPin: release a=unstable\nPin-Priority: 90\n' > /etc/apt/preferences.d/limit-unstable

apt update

apt install wireguard pve-headers

If you get the following:

Loading new wireguard-0.0.20190406 DKMS files...

Building for 4.15.18-9-pve

Module build for kernel 4.15.18-9-pve was skipped since the

kernel headers for this kernel does not seem to be installed.

Setting up linux-headers-4.9.0-8-amd64 (4.9.144-3.1) ...

you need to make sure the pve-headers for your current kernel are installed. If you installed them later, then you need to call:

dkms autoinstall

In both cases we test it with:

modprobe wireguard

If this works, we auto-load the module at boot, as the host does not know that a container needs that module later.

echo "wireguard" >> /etc/modules-load.d/modules.conf

Now we create our unprivileged container (in my case also Debian 9) and then install the user space tools:

echo "deb http://deb.debian.org/debian/ unstable main" > /etc/apt/sources.list.d/unstable-wireguard.list

printf 'Package: *\nPin: release a=unstable\nPin-Priority: 90\n' > /etc/apt/preferences.d/limit-unstable

apt update

and now something special – we want only the user space tools nothing more.

apt-get install --no-install-recommends wireguard-tools

A simple test that everything works can be done by temporarily creating a wg0 device.

ip link add wg0 type wireguard

No output means everything worked. And we’re done, everything else is the same as running Wireguard without container – just choose your howto for this.

Howto install Bitwarden in a LXC container (e.g. Proxmox)

January 13, 2019

As many of you know, I’m quite serious about security and therefore a believer in the theory that a service which is not reachable (e.g. from the Internet) cannot be attacked as easily as one that is. Looking at password managers, this makes choosing not that easy. Sure, there is Keepass and its descendants, but they have the problem that the security is based solely on the master password and the end device security. Knowing friends that use Google Drive for syncing the password file between their devices, I looked at that option, but it was not right for me (e.g. Browser integration, 2FA, …).

Password managers like Lastpass or 1Password are also not the right solution for me. Yes, I believe that their crypto is good, and they never see the passwords of their users, but the 2FA is only as good as the lost password/2FA reset feature is. I’ve read about and seen too many attacks on that to rely on it.

All of this leads to Bitwarden: it provides the same level of functionality as Lastpass or 1Password but is open source and can be hosted on my own server. Not opening it up to the Internet and using it remotely only via VPN (which I have anyway) makes for a really small attack surface. This blog post shows how I installed it within a Proxmox LXC container, which I did to isolate it from other stuff, so there are no dependencies if I need to upgrade something. I don’t like to install anything on the Proxmox host itself. As this is my first try, and I ran into a problem with an unprivileged container and docker within it, this setup currently works only with a privileged container. I know this is not that good, but in this case it is a risk I can accept. If you find a solution to get it running in an unprivileged container please send me an email or write a comment.

LXC container

After creating the LXC container (2 GB RAM, >5 GB HD) with Debian 9, don’t start the container right away. You first need to add the following to /etc/modules-load.d/modules.conf on the Proxmox host:

aufs

overlay

And if you don’t want to reboot, load the modules with

modprobe aufs

modprobe overlay

If you don’t do this your installation will get gigantic (over 30 GB). Now we just need to add the following to /etc/pve/lxc/<vid>.conf

#insert docker part below

lxc.apparmor.profile: unconfined

lxc.cgroup.devices.allow: a

lxc.cap.drop:

Now you can start the container and enter it, we’ll check later if all was correct, but we need docker for this.

Docker and Docker Compose

Some requirements for docker

apt install apt-transport-https ca-certificates curl gnupg2 software-properties-common

and now we can add the repository for docker

curl -fsSL https://download.docker.com/linux/debian/gpg | apt-key add -

add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/debian $(lsb_release -cs) stable"

and now we can install it with

apt-get update

apt-get install docker-ce

The Docker Compose version shipped with Debian is too old to work with this docker, so we need the following:

curl -L "https://github.com/docker/compose/releases/download/1.23.1/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

chmod +x /usr/local/bin/docker-compose

and add /usr/local/bin/ to the path variable by adding

PATH=/usr/local/bin:$PATH

to .bashrc and calling it directly in the bash to get it set without starting a new bash instance. I know that a package would be better, couldn’t find one, so this is a temporary solution. If someone finds a better one, leave it in the comments below.

Now we need to check if the overlay stuff is working by calling docker info and hopefully you get also overlay2 as storage driver:

Containers: 0

Running: 0

Paused: 0

Stopped: 0

Images: 0

Server Version: 18.06.1-ce

Storage Driver: overlay2

Backing Filesystem: extfs

Supports d_type: true

Native Overlay Diff: true

Logging Driver: json-file
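If you want to check this from a script instead of reading the output by eye, the relevant line can be picked out with a few lines of Python. This is a small sketch that only parses text in the format shown above; the function name is made up for this example.

```python
from typing import Optional


def storage_driver(docker_info_output: str) -> Optional[str]:
    """Return the value of the "Storage Driver" line, or None if it is missing."""
    for line in docker_info_output.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "Storage Driver":
            return value.strip()
    return None
```

The text can be taken from `subprocess.run(["docker", "info"], capture_output=True, text=True).stdout`. Anything other than `overlay2` as the result means the module setup from the LXC section should be checked again.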

Bitwarden

Now we just need following:

curl -s -o bitwarden.sh https://raw.githubusercontent.com/bitwarden/core/master/scripts/bitwarden.sh

chmod +x bitwarden.sh

./bitwarden.sh install

./bitwarden.sh start

./bitwarden.sh updatedb

And now you’re done: you have your own password manager server which also supports Google Authenticator (Time-based One-time Password Algorithm, TOTP) as a second factor. Maybe I’ll write a blog post on how to set up a Yubikey as 2FA (desktop and mobile) later.

QuickTip: Howto secure your Mikrotik/RouterOS Router and specially Winbox

October 6, 2018

I didn’t post anything about the multiple security problems in the Mikrotik Winbox API, as I thought that whoever leaves the management of a router open to the Internet should not configure routers at all. Of course it is common sense to open the management interface only on internal network interfaces and only to the source IP addresses you manage the routers from. But as this is a quick tip, I’ll show you how I have configured my Mikrotiks for years.

/ip service

set telnet address=0.0.0.0/0 disabled=yes

set ftp address=0.0.0.0/0 disabled=yes

set www address=0.0.0.0/0 disabled=yes

set ssh address=10.7.0.0/16

set api disabled=yes

set winbox address=127.0.0.1/32

set api-ssl disabled=yes

As you see I’ve only enabled ssh and winbox, and winbox only accepts connections from localhost. The ssh service is protected with the firewall to be reachable only from my admin network. I also disable the weak ciphers:

/ip ssh set strong-crypto=yes

And I’ve configured public key authentication for the ssh access. Now your question is how to access the router with winbox? Simple, use ssh port forwarding. So the Winbox API is only accessible by users that have a valid ssh logon – and ssh is much more robust and secure than Winbox. On Linux the port forwarding is done like this:

ssh -L 8291:127.0.0.1:8291 admin@<mikrotik>

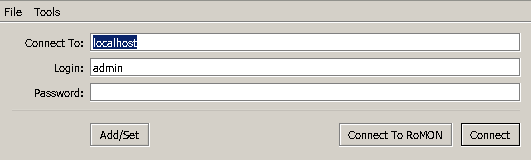

On Windows you can do the same with Putty. In Winbox just connect to localhost:
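If Winbox cannot connect, it helps to first check whether the forwarded port is open on your own machine at all. The following Python sketch only tests whether a TCP connection to the local end of the tunnel succeeds; the function name is made up for this example, and it says nothing about whether Winbox is really answering on the other side.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# With the ssh session from above running, this should report True:
# print(port_is_open("127.0.0.1", 8291))
```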

Some VPN providers leak your IPv6 IP address

August 10, 2018

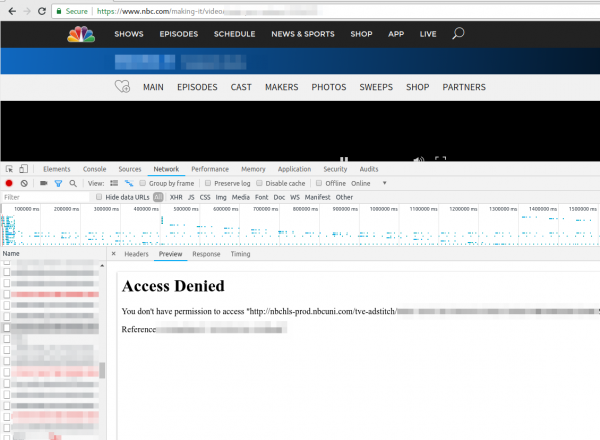

Just a short note. Today a friend called to ask if I could help him get TV streaming from TV stations in the US running. When I looked at it, he had even selected a VPN provider which offers servers in the US to circumvent the geo restrictions, but still it didn’t work. He showed me the NBC website where the first ad was shown and then the screen stayed black. Having no experience with VPN providers and TV streaming sites, I first checked the openvpn configuration and made sure that the routing table was correct (sending all non-local traffic to the VPN). It looked good, so I opened the developer tools in the browser and saw the following repeating:

Searching the Internet did not provide an answer … then I just tried to download the file with wget and I got the following:

$ wget http://nbchls-prod.nbcuni.com/tve-adstitch/4421/xxxx-1.ts

--2018-08-10 19:20:20-- http://nbchls-prod.nbcuni.com/tve-adstitch/4421/xxxx-1.ts

Resolving nbchls-prod.nbcuni.com (nbchls-prod.nbcuni.com)... 2600:1406:c800:495::308, 2600:1406:c800:486::308, 104.96.129.98

Connecting to nbchls-prod.nbcuni.com (nbchls-prod.nbcuni.com)|2600:1406:c800:495::308|:80... connected.

HTTP request sent, awaiting response... 403 Forbidden

2018-08-10 xx:xx:xx ERROR 403: Forbidden.

Seeing this it hit me … it’s using IPv6 … so I did a fast check with

% wget -4 http://nbchls-prod.nbcuni.com/tve-adstitch/4421/xxxx-1.ts

--2018-08-10 19:20:30-- http://nbchls-prod.nbcuni.com/tve-adstitch/4421/xxxx-1.ts

Resolving nbchls-prod.nbcuni.com (nbchls-prod.nbcuni.com)... 104.96.129.98

Connecting to nbchls-prod.nbcuni.com (nbchls-prod.nbcuni.com)|104.96.129.98|:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 242520 (237K)

So with an IPv4 request it worked. His VPN provider was leaking the IPv6 traffic to the Internet – that is potentially a security/privacy problem, as many use a VPN provider to hide themselves! So make sure to check before relying on the VPN security/privacy.
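A quick way to see whether a host is even reachable via IPv6 is to split the resolved addresses by address family, like wget showed in its "Resolving" line. The following Python sketch does just that; the function names are made up for this example, and it only tells you which families a name resolves to, not which path your traffic really takes.

```python
import ipaddress
import socket


def resolve(host: str, port: int = 80) -> list:
    """Return the sorted, de-duplicated addresses the system resolver gives for host."""
    infos = socket.getaddrinfo(host, port, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def split_by_family(addresses) -> tuple:
    """Split a list of IP address strings into (ipv4, ipv6) lists."""
    v4, v6 = [], []
    for addr in addresses:
        if ipaddress.ip_address(addr).version == 6:
            v6.append(addr)
        else:
            v4.append(addr)
    return v4, v6


# Addresses taken from the wget output above.
v4, v6 = split_by_family(
    ["2600:1406:c800:495::308", "2600:1406:c800:486::308", "104.96.129.98"]
)
print("IPv4:", v4)
print("IPv6:", v6)
```

If the IPv6 list is not empty and the VPN only routes IPv4, requests to that host may go out over your normal connection.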

How to configure a Mikrotik router as DHCP-PD Client (Prefix delegation)

February 6, 2018

Over time more and more ISPs provide IPv6 addresses to the router (and the clients behind it) via DHCP-PD. To be more verbose, that’s DHCPv6 with prefix delegation. This allows the ISP to provide you with more than one subnet, which allows you to use multiple networks without NAT. And forget about NAT and IPv6 – there is no standardized way to do it, and it will break too much. The idea with PD is also that you can use normal home routers and cascade them, which requires that each router provides a smaller prefix/subnet to the next router. Everything should work without configuration – that was at least the plan of the IETF working group.

Anyway let’s stop with the theory and provide some code. In my case my provider requires my router to establish a pppoe tunnel, which provides my router with an IPv4 address automatically. The config looks like this:

/interface pppoe-client add add-default-route=yes disabled=no interface=ether1vlanTransitModem name=pppoeDslInternet password=XXXX user=XXXX

For IPv6 we need to enable the DHCPv6 client with the following command:

/ipv6 dhcp-client add interface=pppoeDslInternet pool-name=poolIPv6ppp use-peer-dns=no

But a check with

/ipv6 dhcp-client print

will only show you that the client is “searching…”. The reason for this is that you most likely block incoming connections from the Internet – If you don’t filter –> bad boy! :-). You need to allow DHCP replies from the server.

/ipv6 firewall filter add chain=input comment="DHCPv6 server reply" port=547 protocol=udp src-address=fe80::/10

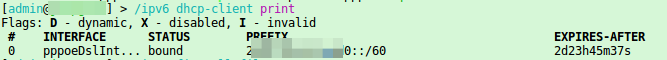

Now you should see something like this

In this case we got a /60 prefix delegated from the ISP, which amounts to 16 /64 subnets. The last step is to configure the IP addresses on your internal networks. Yes, you could just statically add the IP addresses, but if the provider changes the subnet after a disconnect, you need to reconfigure everything. It’s better to configure the router to dynamically assign addresses from the delegated prefix to the internal interfaces. You just need to call the following for each of your internal interfaces:

/ipv6 address add from-pool=poolIPv6ppp interface=vlanInternal

The following command should show the prefixes currently assigned to the various internal networks

/ipv6 address print
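The subnet arithmetic for the delegated prefix can be checked quickly with Python’s ipaddress module. The prefix used here is from the documentation range 2001:db8::/32 and is only a stand-in for whatever your ISP delegates.

```python
import ipaddress

# Stand-in for the delegated prefix (documentation range, not a real delegation).
delegated = ipaddress.ip_network("2001:db8:0:10::/60")

# A /60 leaves 4 bits before the /64 boundary, so 2**4 = 16 subnets.
subnets = list(delegated.subnets(new_prefix=64))

print(len(subnets))             # 16
print(subnets[0], subnets[-1])  # 2001:db8:0:10::/64 2001:db8:0:1f::/64
```

Each internal interface that gets an address from the pool uses up one of these /64 networks.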

Hey, IPv6 is not that complicated. 🙂

Tips / Solutions for setting up OpenVPN on Debian 9 within Proxmox / LXC containers

September 21, 2017

When I tried to migrate my OpenVPN setup to a container on my new Proxmox server I ran into multiple problems, where searching the Internet provided solutions that did not work or were out of date. So I thought I’d put everything one needs to set up OpenVPN on Debian 9 within a Proxmox / LXC container together in one blog post.

Getting a TUN device into the unprivileged container

As you really should run containers in unprivileged mode, the typical solutions with adding/allowing

lxc.cgroup.devices.allow: c 10:200 rwm

won’t work. And running a container in privileged mode is a bad, bad idea, but luckily there is a native LXC solution.

Stop the container with

pct stop <containerid>

Add following line to /etc/pve/lxc/<containerid>.conf

lxc.mount.entry = /dev/net/tun dev/net/tun none bind,create=file

start the container with

pct start <containerid>

OpenVPN will now be able to create a tun device. Just do a test run with

openvpn --config /etc/openvpn/blabla.conf

Add OpenVPN config files to the “autostart”

You need to put the OpenVPN files into /etc/openvpn/ with the extension .conf. And if you add a new file you need to run

systemctl daemon-reload

before doing a service openvpn restart.

Changes in existing config files don’t need the systemd reload.

Getting systemd to start openvpn within an unprivileged container

So OpenVPN now works manually but not with the “init” script. You see the following error message in the log file:

daemon() failed or unsupported: Resource temporarily unavailable (errno=11)

To solve this edit

/lib/systemd/system/openvpn@.service

and put a # in front of

LimitNPROC=10

now reload systemd with

systemctl daemon-reload

and it should work.
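If you have to do this in several containers, the edit can be scripted. The following Python sketch only shows the text transformation (commenting out one setting); the function name is made up for this example, and reading and writing the unit file as well as the daemon-reload are left to you.

```python
def comment_out(unit_text: str, key: str) -> str:
    """Return unit_text with every active "key=..." line prefixed by "#"."""
    result = []
    for line in unit_text.splitlines():
        if line.strip().startswith(key + "="):
            result.append("#" + line)
        else:
            # Lines that are already commented out are left untouched.
            result.append(line)
    return "\n".join(result) + "\n"


# Hypothetical excerpt of a unit file, for illustration only.
example = "[Service]\nLimitNPROC=10\nDeviceAllow=/dev/net/tun rw\n"
print(comment_out(example, "LimitNPROC"))
```

Running it a second time on its own output changes nothing, so it is safe to apply repeatedly.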

Hope that info/tips helped you to solve the problems faster than I did. 🙂 If you know some other tips / solutions for running OpenVPN in a Debian 9 container within LXC / Proxmox write a comment! Thx!
